Problem Set 1 (120 points)

Important information

We provide signatures of the functions that you have to implement. Make sure you follow the signatures defined, otherwise your coding solutions will not be graded.

Read the homework rules carefully. If you do not follow them you will likely be penalized.

Problem 1 (Python demo) 40 pts

from scipy.linalg import toeplitz

import numpy as np

import math

import scipy.io.wavfile as wav

import matplotlib.pyplot as plt

from IPython.display import Audio

%matplotlib notebook

# reading

rate, audio = wav.read("TMaRdy00.wav")

# plotting

plt.plot(audio)

plt.ylabel("Amplitude")

plt.xlabel("Time")

plt.title("You wanna piece of me, boy?")

plt.show()

# playing

Audio(audio, rate=rate)

Our next goal is to process this signal by multiplying it by a special type of matrix (convolution operation) that will smooth the signal.

- (5 pts) Before processing this file let us estimate what size of matrix we can afford. Let $N$ be the size of the signal. Estimate analytically the memory in megabytes required to store a dense square matrix of size $N\times N$ so that it fits in your RAM, and print this number. Cut the signal so that you do not run into swap (overflow of RAM). Note: cut the signal by taking every p-th element of the array: signal[::p].

# N = ...

print(N)
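As a rough guide to the estimate above, here is a minimal sketch. It assumes double-precision entries (8 bytes each) and 1 MB = 10^6 bytes; the helper name dense_matrix_megabytes is hypothetical and not part of the required interface.

```python
import numpy as np


def dense_matrix_megabytes(n, dtype=np.float64):
    """Memory needed for a dense n x n matrix, in megabytes (1 MB = 10**6 bytes)."""
    bytes_per_entry = np.dtype(dtype).itemsize  # 8 for float64
    return n * n * bytes_per_entry / 10**6


# A 20000 x 20000 float64 matrix already needs 3200 MB.
print(dense_matrix_megabytes(20_000))
```

Comparing this number with the RAM actually available on your machine suggests how large the step p in signal[::p] has to be.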

- (5 pts) Write a function that outputs the matrix $T$: $$T_{ij} = \sqrt{\frac{\alpha}{\pi}}e^{-\alpha (i-j)^2}, \quad i,j=1,\dots,N$$ as a numpy array. Avoid using loops or lists! The function np.meshgrid will be helpful for this task. Note: matrices that depend only on the difference of indices, $T_{ij} \equiv T_{i-j}$, are called Toeplitz. Toeplitz matrix-by-vector multiplication is a convolution since it can be written as $$y_i = \sum_{j=1}^N T_{i-j} x_j.$$ Convolutions can be computed faster than in $\mathcal{O}(N^2)$ operations using the fast Fourier transform (this will be covered later in our course, no need to implement it here).

def gen_toeplitz(N, alpha): return T

# INPUT: N - integer (positive), alpha - float (positive)

# OUTPUT: T - np.array (shape: NxN)

def gen_toeplitz(N, alpha):

    # Your code is here

    return T
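The note about Toeplitz matrices and convolution can be checked numerically. The sketch below is only an illustration: the kernel $t_k = 1/(1+|k|)$ and all variable names are assumptions chosen for the example, not the matrix requested in the task.

```python
import numpy as np

N = 6
rng = np.random.default_rng(0)
x = rng.standard_normal(N)

# Hypothetical symmetric kernel t_k = 1 / (1 + |k|), chosen only for illustration.
t = 1.0 / (1.0 + np.arange(N))

# T[i, j] = t[|i - j|], built from the index difference without Python loops.
i, j = np.meshgrid(np.arange(N), np.arange(N), indexing="ij")
T = t[np.abs(i - j)]

# The same product written as a convolution with the kernel
# (t_{N-1}, ..., t_1, t_0, t_1, ..., t_{N-1}).
kernel = np.concatenate([t[:0:-1], t])
y_conv = np.convolve(x, kernel, mode="full")[N - 1 : 2 * N - 1]

print(np.allclose(T @ x, y_conv))
```

The dense product costs $\mathcal{O}(N^2)$ operations, while the convolution form is what FFT-based methods accelerate.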

Convolution (10 pts)

- (5 pts) Write a function convolution (see below) that takes the signal you want to convolve and multiplies it by the Toeplitz matrix $T$ (for matvec operations use the @ operator).

# INPUT: signal - np.array (shape: Nx1), N - int (positive), alpha - float (positive)

# OUTPUT: convolved_signal - np.array (shape: Nx1)

def convolution(signal, N, alpha):

    # Your code is here

    return convolved_signal

- (3 pts) Plot the first $100$ points of the result and the first $100$ points of your signal on the same figure. Do the same plots for $\alpha = \frac{1}{5}$, $\alpha = \frac{1}{100}$ using plt.subplots in matplotlib. Each subplot should contain the first $100$ points of the initial and convolved signals for some $\alpha$. Make sure that the results look like a smoothed version of the initial signal.

- (2 pts) Play the resulting signal. In order to do so you should also scale the frequency (rate), which is one of the inputs of Audio. Note that you cannot play a signal which is too small.

# Your code is here

Deconvolution (20 pts)

Given a convolved signal $y$ obtained from an initial signal $x$, our goal now is to recover $x$ by solving the system $$ y = Tx. $$ To do so we will run the iterative process $$ x_{k+1} = x_{k} - \tau_k (Tx_k - y), \quad k=0,1,2,\dots $$ starting from the zero vector $x_0$. There are different ways to define the parameters $\tau_k$. Different choices lead to different methods (e.g. Richardson iteration, Chebyshev iteration, etc.). This topic will be covered in detail later in our course.

To get some intuition for why the limit of this process solves $Tx=y$, consider the following. If $x_k$ converges to some limit $x$, then so does $x_{k+1}$. Assuming in addition that $\tau_k$ tends to a nonzero limit $\tau$ and taking $k\to \infty$, we arrive at $x = x - \tau (Tx - y)$, and hence $x$ is the solution of $Tx = y$.

Another important point is that the iterative process requires only matrix-vector products $Tx_k$ on each iteration instead of the whole matrix. In this problem we nevertheless work with the full matrix, but keep in mind that convolution can be done efficiently without storing the whole matrix.
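The fixed-point argument can be seen on a tiny system. The following is a minimal sketch under stated assumptions: the $2\times 2$ matrix, the right-hand side and the constant step $\tau = 1/\|T\|_2$ are all chosen for illustration, and a constant step is not the line-search choice of $\tau_k$ asked for in the task below.

```python
import numpy as np

# Small symmetric positive definite system, chosen only for illustration.
T = np.array([[2.0, 1.0], [1.0, 3.0]])
x_true = np.array([1.0, -1.0])
y = T @ x_true

tau = 1.0 / np.linalg.norm(T, 2)  # constant step, an assumption of this sketch
x = np.zeros_like(y)              # start from the zero vector
for k in range(200):
    x = x - tau * (T @ x - y)     # only the product T @ x is needed

rel_error = np.linalg.norm(x - x_true) / np.linalg.norm(x_true)
print(rel_error)
```

For a symmetric positive definite $T$ this constant step makes every eigenvalue of $I - \tau T$ lie in $[0, 1)$, which is why the error shrinks on each iteration.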

- (5 pts) For each $k$ choose the parameter $\tau_k$ such that the norm of the residual $r_{k+1}=Tx_{k+1} - y$ is as small as possible (line search with search direction $r_k$): $$ \|Tx_{k+1} - y\|_2 \to \min_{\tau_k}. $$ The minimizer should be found analytically: the answer to this bullet is a derivation of $\tau_k$. The parameter $\tau_k$ should be expressed in terms of the residuals $r_k = T x_k - y$.

# Your solution is here

- (10 pts) Write a function iterative that outputs the accuracy: a numpy array of relative errors $\big\{\frac{\|x_{k+1} - x\|_2}{\|x\|_2}\big\}$ after num_iter iterations using $\tau_k$ from the previous task. Note: The only loop you are allowed to use here is a loop over $k$.

# INPUT: N - int (positive), num_iter - int (positive), y - np.array (shape: Nx1, convolved signal),

# s - np.array (shape: Nx1, original signal), alpha - float (positive)

# OUTPUT: rel_error - np.array size (num_iter x 1)

def iterative(N, num_iter, y, s, alpha):

    # Your code is here

    return rel_error

- (2 pts) Set num_iter=1000, x=s[::20] and make a convergence plot for $\alpha = \frac{1}{2}$ and $\alpha = \frac{1}{5}$.

# Your plots are here

- (3 pts) Set x=s[::20], num_iter=1000 and $\alpha=\frac{1}{5}$. Explain what happens to the convergence if you add small random noise of amplitude $10^{-3}\max(x)$ to $y$. The answer to this question should be an explanation supported by plots and/or tables.

# Your code is here
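One possible way to generate the perturbation is sketched below. The arrays x and y here are synthetic stand-ins for s[::20] and the convolved signal, and reading "amplitude" as a uniform bound on each entry of the noise is an assumption; Gaussian noise scaled by the same factor would be another reasonable reading.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-ins for the real data: x plays the role of s[::20], y of the convolved signal.
x = np.sin(np.linspace(0.0, 10.0, 500))
y = x.copy()

amplitude = 1e-3 * np.max(x)
# Assumption: "amplitude" means entries drawn uniformly from [-amplitude, amplitude).
noise = amplitude * rng.uniform(-1.0, 1.0, size=y.shape)
y_noisy = y + noise

print(np.max(np.abs(noise)) <= amplitude)
```

Fixing the seed of the generator keeps the experiment reproducible when the convergence plots are regenerated.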

Problem 2 (Theoretical tasks) 45 pts

1.

- (5 pts) Prove that $\| U A \|_F = \| A U \|_F = \| A \|_F$ for any unitary matrix $U$.

- (5 pts) Prove that $\| Ux \|_2 = \| x \|_2$ for any $x$ iff $U$ is unitary.

- (5 pts) Prove that $\| U A \|_2 = \| A U \|_2 = \| A \|_2$ for any unitary $U$.

2.

- (5 pts) Using the results from the previous subproblem, prove that $\| A \|_F \le \sqrt{\mathrm{rank}(A)} \| A \|_2$. Hint: SVD will help you.

- (5 pts) Show that for any $m, n$ and $k \le \min(m, n)$ there exists $A \in \mathbb{R}^{m \times n}: \mathrm{rank}(A) = k$, such that $\| A \|_F = \sqrt{\mathrm{rank}(A)} \| A \|_2$. In other words, show that the previous inequality is sharp (the bound is attained).

- (5 pts) Prove that if $\mathrm{rank}(A) = 1$, then $\| A \|_F = \| A \|_2$.

- (5 pts) Prove that $\| A B \|_F \le \| A \|_2 \| B \|_F$.

3.

- (3 pts) Differentiate with respect to $A$ the function $$ f(A) = \mathrm{sin}(x^\top A B C D x), $$ where $x$ is a vector and $A, B, C, D$ are square matrices.

- (7 pts) Differentiate with respect to $y, A, X$ the function $$f(y, A, X) = \mathrm{tr}(\mathrm{diag}(y) A X),$$ where $y \in \mathbb{R}^n$ and $A, X \in \mathbb{R}^{n \times n}$. Here

$$ \mathrm{diag}(y)_{i, j} = \begin{cases} y_i, & \text{if}\ i = j \\ 0, & \text{otherwise} \end{cases} $$

# Your solution is here
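A numerical sanity check is not a proof, but it can catch a misread statement before you start proving it. The sketch below tests the unitary invariance of the Frobenius and spectral norms and the bound $\| A \|_F \le \sqrt{\mathrm{rank}(A)} \| A \|_2$ from items 1 and 2 on one random real matrix; the sizes and the way the rank-$k$ matrix is generated are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
m, n, k = 7, 5, 3

# Random matrix of rank k (with probability one) and a random orthogonal matrix Q.
A = rng.standard_normal((m, k)) @ rng.standard_normal((k, n))
Q, _ = np.linalg.qr(rng.standard_normal((m, m)))

fro = np.linalg.norm(A, "fro")
spec = np.linalg.norm(A, 2)
rank = np.linalg.matrix_rank(A)

print(np.isclose(np.linalg.norm(Q @ A, "fro"), fro))  # unitary invariance, Frobenius
print(np.isclose(np.linalg.norm(Q @ A, 2), spec))     # unitary invariance, spectral
print(fro <= np.sqrt(rank) * spec)                     # ||A||_F <= sqrt(rank) ||A||_2
```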

Problem 3 (Strassen algorithm) 15 pts

1. (3 pts) Implement the naive algorithm for square matrix multiplication with explicit “for” loops.

def naive_multiplication(A, B):

    """

    Implement naive matrix multiplication with explicit for loops

    Parameters: Matrices A, B

    Returns: Matrix C = AB

    """

    # Your code is here

    return C

2. (7 pts) Implement the Strassen algorithm.

def strassen(A, B):

    """

    Implement Strassen algorithm for matrix multiplication

    Parameters: Matrices A, B

    Returns: Matrix C = AB

    """

    # Your code is here

    return C

3. (5 pts) Compare three approaches: naive multiplication, Strassen algorithm and standard NumPy function.

Provide a log-scale plot of the runtime of multiplication versus the matrix size. You will have three lines; do not forget to add a legend, axis labels and other attributes (see our requirements).

Consider matrix sizes in the range from 100 to 700 with step 100, i.e. $n=100, 200,\ldots, 700$.

Justify the results theoretically (e.g., use the known formulas for total multiplication complexity of naive and Strassen algorithms).

# Your code is here
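For the theoretical justification, it can help to tabulate the multiplication counts. The sketch below is a simplified model: it counts scalar multiplications only, assumes $n$ is a power of two and that the Strassen recursion goes all the way down to $1\times 1$ blocks. The function names are hypothetical.

```python
def naive_mults(n):
    """Scalar multiplications used by the triple-loop algorithm for n x n matrices."""
    return n ** 3


def strassen_mults(n):
    """Scalar multiplications used by Strassen recursion down to 1 x 1 blocks.

    Assumes n is a power of two, so M(n) = 7 * M(n / 2) with M(1) = 1.
    """
    if n == 1:
        return 1
    return 7 * strassen_mults(n // 2)


for n in (2, 8, 64, 512):
    print(n, naive_mults(n), strassen_mults(n))
```

These counts ignore additions, memory traffic and interpreter overhead, so measured runtimes for $n \le 700$ need not follow the same ordering.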

Problem 4 (SVD) 20 pts

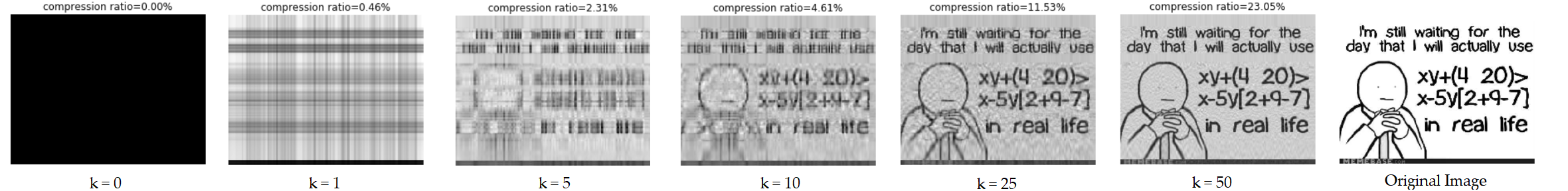

In this assignment you are supposed to study how SVD could be used in image compression.

1. (2 pts) Compute the singular values of some predownloaded image (via the code provided below) and plot them. Do not forget to use logarithmic scale.

%matplotlib inline

import matplotlib.pyplot as plt

from PIL import Image, ImageDraw

import requests

import numpy as np

url = 'https://pbs.twimg.com/profile_images/1658625695/my_photo_400x400.jpg' # Ivan

url = 'https://i.chzbgr.com/full/5536320768/h88BAB406/' # Insight

# url = '' # your favorite picture, please!

face_raw = Image.open(requests.get(url, stream=True).raw)

face = np.array(face_raw).astype(np.uint8)

plt.imshow(face_raw)

plt.xticks(())

plt.yticks(())

plt.title('Original Picture')

plt.show()

# Your code is here

2. (3 pts) Complete the function compress, which performs the SVD and truncates it (using $k$ singular values/vectors). See the prototype below.

Note that for a colour image you have to split the image into channels and work with the matrices corresponding to different channels separately.

Plot the approximate reconstructed image $M_\varepsilon$ of your favorite image such that $\mathrm{rank}(M_\varepsilon) = 5, 20, 50$ using plt.subplots.

def compress(image, k):

    """

    Perform svd decomposition and truncate it (using k singular values/vectors)

    Parameters:

        image (np.array): input image (probably, colourful)

        k (int): approximation rank

    --------

    Returns:

        reconst_matrix (np.array): reconstructed matrix (tensor in colourful case)

        s (np.array): array of singular values

    """

    # Your code is here

    return reconst_matrix, s

# Your code is here
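The truncation step for a single channel can be sketched as follows. This is only an illustration on a random matrix standing in for one image channel; the names M, M_k and the sizes are assumptions, and the sketch does not handle colour images or the uint8 conversion.

```python
import numpy as np

rng = np.random.default_rng(0)
M = rng.standard_normal((40, 30))  # stand-in for one channel of an image
k = 5

U, s, Vt = np.linalg.svd(M, full_matrices=False)
M_k = (U[:, :k] * s[:k]) @ Vt[:k, :]  # keep the k largest singular triplets

rel_error = np.linalg.norm(M - M_k, "fro") / np.linalg.norm(M, "fro")
# For the truncated SVD, the Frobenius error is determined by the discarded singular values.
expected = np.sqrt(np.sum(s[k:] ** 2) / np.sum(s ** 2))
print(np.isclose(rel_error, expected))
```

Because the error depends only on the singular values, the relative error for every rank can be obtained from a single SVD.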

3. (3 pts) Plot the following two figures for your favorite picture

- How does the relative error of approximation depend on the rank of approximation?

- How does the compression rate in terms of stored information ((singular vectors + singular values) / total size of image) depend on the rank of approximation?

# Your code is here
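The compression rate from the second bullet can be written down directly. The sketch below assumes a single $m \times n$ channel and counts stored numbers rather than bytes; the function name compression_rate is hypothetical.

```python
def compression_rate(m, n, k):
    """Stored numbers for a rank-k SVD of an m x n matrix, relative to m * n."""
    stored = k * (m + n + 1)  # k left vectors, k right vectors, k singular values
    return stored / (m * n)


print(compression_rate(400, 400, 20))
```

Under this count the truncated SVD stops saving space once $k(m+n+1) \ge mn$, which for a $400 \times 400$ channel happens just below $k = 200$.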

4. (2 pts) Consider the following two pictures. Compute their approximations (with the same rank, or relative error). What do you see? Explain results.

url1 = 'http://sk.ru/resized-image.ashx/__size/550x0/__key/communityserver-blogs-components-weblogfiles/00-00-00-60-11/skoltech1.jpg'

url2 = 'http://www.simpsoncrazy.com/content/characters/poster/bottom-right.jpg'

image_raw1 = Image.open(requests.get(url1, stream=True).raw)

image_raw2 = Image.open(requests.get(url2, stream=True).raw)

image1 = np.array(image_raw1).astype(np.uint8)

image2 = np.array(image_raw2).astype(np.uint8)

plt.figure(figsize=(18, 6))

plt.subplot(1,2,1)

plt.imshow(image_raw1)

plt.title('One Picture')

plt.xticks(())

plt.yticks(())

plt.subplot(1,2,2)

plt.imshow(image_raw2)

plt.title('Another Picture')

plt.xticks(())

plt.yticks(())

plt.show()

# Your code is here

Problem 5 (Bonus)

A norm is called absolute if $\|x\|=\| \lvert x \rvert \|$ holds for any vector $x$, where $x=(x_1,\dots,x_n)^T$ and $\lvert x \rvert = (\lvert x_1 \rvert,\dots, \lvert x_n \rvert)^T$. Give an example of a norm which is not absolute.

Write a function ranks_HOSVD(A, eps) that calculates the Tucker ranks of a d-dimensional tensor $A$ using the High-Order SVD (HOSVD) algorithm, where eps is the relative accuracy in the Frobenius norm between the approximated and the initial tensors. Details can be found here on Figure 4.3.

def ranks_HOSVD(A, eps):

    return r # r should be a tuple of ranks r = (r1, r2, ..., rd)
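HOSVD works with the singular values of the mode unfoldings of the tensor, so a helper for unfolding is a natural building block. The sketch below shows one such helper; the name unfold and the column ordering it produces are assumptions (references differ on the ordering, which does not affect singular values or ranks), and it does not implement the rank selection itself.

```python
import numpy as np


def unfold(A, mode):
    """Mode-`mode` unfolding: rows are indexed by axis `mode`, columns by all other axes."""
    return np.moveaxis(A, mode, 0).reshape(A.shape[mode], -1)


# Rank-one tensor a (x) b (x) c: every unfolding has matrix rank 1.
a, b, c = np.arange(1.0, 4.0), np.arange(1.0, 5.0), np.arange(1.0, 6.0)
A = np.einsum("i,j,k->ijk", a, b, c)

print([unfold(A, mode).shape for mode in range(A.ndim)])
print([np.linalg.matrix_rank(unfold(A, mode)) for mode in range(A.ndim)])
```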